Understanding alternative research metrics

Research metrics are traditionally used to quantify the impact of scholarly work. These metrics are applied at the journal and author levels. Many researchers and academics include metrics in their files when going forward for retention, tenure and promotion. The impact factor has been the research metric used most often and for a long time was the only tool used to measure impact. A journal's impact factor for a given year is the average number of citations received in that year by the articles the journal published in the two preceding years.
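As a rough illustration of the arithmetic behind the impact factor, the sketch below assumes the standard two-year window: citations received in one year to items published in the previous two years, divided by the number of citable items from those two years. The function name and the journal figures are hypothetical and chosen only to make the calculation easy to follow.

```python
def impact_factor(citations_this_year, items_prev_two_years):
    """Two-year impact factor: citations received this year to items from the
    previous two years, divided by the citable items published in those years."""
    if items_prev_two_years <= 0:
        raise ValueError("journal published no citable items in the window")
    return citations_this_year / items_prev_two_years


# Hypothetical journal: 150 + 170 = 320 citable items in the two preceding
# years, cited 800 times in the year being assessed.
print(impact_factor(800, 150 + 170))  # 2.5
```

Because the result is an average across the whole journal, it says little about how often any single article has been cited.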

As scholarship has moved online and Open Access has gathered momentum, there is growing consensus that alternative metrics are needed. A variety of research metrics are currently used, and the number continues to grow. Alternative metrics, commonly called altmetrics, include how often someone’s work is mentioned in the news, in blogs or on Twitter. They can also include the number of downloads and online views of an article that has been posted on a website or shared through an institutional repository. Altmetrics are complementary to traditional, citation-based metrics.

Need for altmetrics

There are a number of reasons why researchers and institutions might use alternative metrics.

- Because alternative metrics measure interactions with research works in different ways, these interactions can often be measured sooner than traditional metrics such as citations. When an article is published and/or shared online, it can take time before it makes its way into other scholarly work as a citation. However, interactions with the work may happen more quickly.

- Alternative metrics can be generated for different types of scholarly output and not just for published papers. As such, these metrics provide a more holistic assessment of the impact of scholarly work precisely because they use broader indicators.

- Citation-based metrics generally measure the impact of the work on the academic and research community. In comparison, alternative metrics can capture interactions and attention that the research receives from the public and those outside the academic world.

For researchers, then, it is important to know what tools are available for generating altmetrics.

Altmetrics tools

Altmetric Explorer is a subscription-based tool that allows institutions to track activity for publications and scholarly works that are shared by individual researchers, departments or institutions. The Altmetric Explorer website contains numerous resources to help guide you in your choice of alternative metrics. It also provides a video-based beginner’s guide.

Kudos is a newer online tool, founded in 2011 and developed to help researchers promote their work and track its impact over time. This tool can help academics maximise the visibility of their work. Kudos imports and displays a number of metrics from different sources including Altmetric and Reuters. Furthermore, Kudos is free to the user. By using Kudos, researchers can “Track performance and capture evidence of engagement and impact activities, with a range of meaningful metrics all in one place”.

PlumX Metrics is a tool provided by Elsevier. This tool captures online interactions with individual pieces of research output, such as academic articles, conference proceedings, book chapters and more. PlumX Metrics groups metrics into five categories: Citations, Usage, Captures, Mentions and Social Media. The PlumX website provides a description of these categories:

- Citations: This is a category that contains both traditional citation indexes such as Scopus, as well as citations that help indicate societal impact such as Clinical or Policy Citations. Examples: citation indexes, patent citations, clinical citations, policy citations.

- Usage: This is a way to signal if anyone is reading the articles or otherwise using the research. Usage is the number one statistic researchers want to know after citations. Examples: clicks, downloads, views, library holdings, video plays.

- Captures: This indicates that someone wants to come back to the work. Captures can be a leading indicator of future citations. Examples: bookmarks, code forks, favorites, readers, watchers.

- Mentions: This is a measurement of activities such as news articles or blog posts about research. Mentions is a way to tell that people are truly engaging with the research. Examples: blog posts, comments, reviews, Wikipedia references, news media.

- Social media: This category includes the tweets, Facebook likes, etc. that reference the research. Social media can help measure “buzz” and attention. Social media can also be a good measure of how well a particular piece of research has been promoted. Examples: shares, likes, comments, tweets.
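To make the five categories concrete, here is a minimal sketch of how raw interaction counts could be rolled up by category. It is not PlumX's own API or method: the lookup table simply copies the example metric types from the descriptions above, and the function name and the sample counts are hypothetical.

```python
from collections import Counter

# Example metric types copied from the five category descriptions above.
# "comments" is listed under both Mentions and Social media, so it is left
# out here and is counted as "Unclassified".
CATEGORY_EXAMPLES = {
    "Citations": ["citation indexes", "patent citations",
                  "clinical citations", "policy citations"],
    "Usage": ["clicks", "downloads", "views", "library holdings", "video plays"],
    "Captures": ["bookmarks", "code forks", "favorites", "readers", "watchers"],
    "Mentions": ["blog posts", "reviews", "Wikipedia references", "news media"],
    "Social media": ["shares", "likes", "tweets"],
}

# Invert the table so that each metric type points at its category.
TYPE_TO_CATEGORY = {
    metric_type: category
    for category, metric_types in CATEGORY_EXAMPLES.items()
    for metric_type in metric_types
}


def tally_by_category(events):
    """Sum (metric_type, count) pairs into per-category totals."""
    totals = Counter()
    for metric_type, count in events:
        totals[TYPE_TO_CATEGORY.get(metric_type, "Unclassified")] += count
    return dict(totals)


# Made-up counts for a single article, for illustration only.
sample_events = [("downloads", 120), ("views", 300), ("tweets", 45),
                 ("bookmarks", 12), ("comments", 3)]
print(tally_by_category(sample_events))
```

Keeping the totals separate by category, rather than adding them into one score, preserves the distinction between kinds of attention that the categories are meant to draw.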

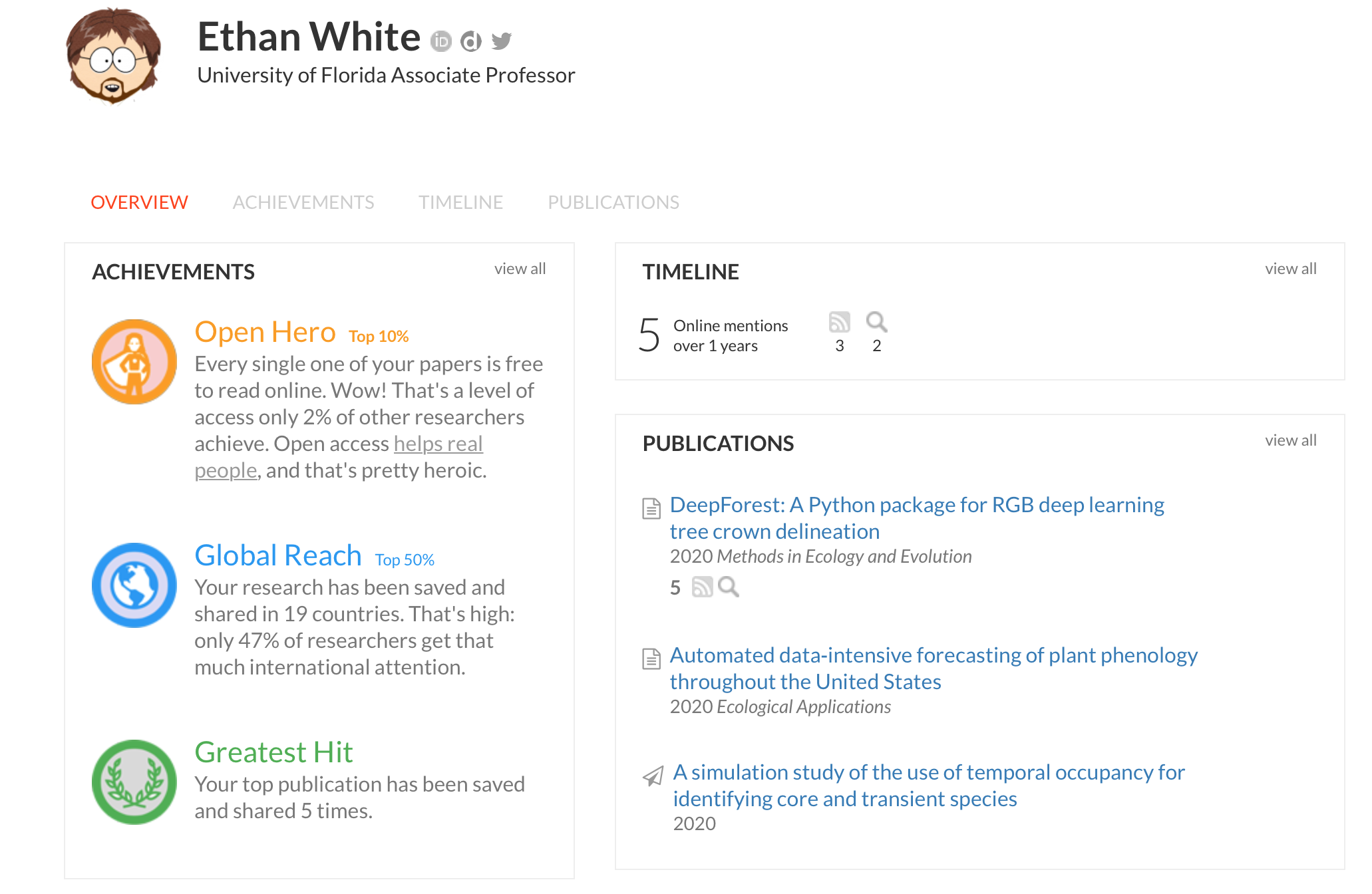

ImpactStory is an open-source, web-based tool that helps researchers explore and share the diverse impacts of all their research products – traditional ones like journal articles but also alternative products like blog posts, datasets and software. The ImpactStory site also provides a sample researcher profile built with its tools.

Limitations of present altmetrics

There are some cautions and limitations to using altmetrics. Because these measures are still at an early stage of development, strict standards and guidelines for their use have not yet been fully established. Like many metrics, some of them lend themselves to manipulation and do not always reflect the quality of the work itself.

Several organisations are currently developing standards to guide the use of these alternative metrics. The European Commission established an expert group on alternative, or next-generation, metrics as part of the Open Science movement. In addition, a group of experts published a paper in 2020 titled Open Science: Altmetrics and Rewards. The paper states:

Altmetrics have the potential to foster such a paradigmatic shift in evaluating and rewarding research activities. They can reflect a wider view on what impact is and how it is created and can thus help to break away from traditional citation-based indicators and promote innovative multiple perspectives on measuring unconventional types of research output, such as data, methods, blogs, and public engagement.

However, the use of altmetrics also raises several substantive concerns. One is that it is not yet clear what kind of qualities such altmetrics indicate. In order to learn more about the meaning of altmetrics, experimentation should be encouraged and experiences should be exchanged across countries and research communities. Another concern is that providers of altmetrics data are themselves not fully open in terms of the methods and data they employ in aggregating the data. Hence, results are hardly replicable, and their use in decision making is neither standardised nor transparent.

Summary

As the Open Science and Open Access movements grow and develop, and as more research is shared through a variety of online platforms, there is a clear need for alternative measures of impact. Researchers need to keep pace and track the impact of all of their scholarly work in new ways. Understanding how alternative metrics are being used and what tools you can access to gather these metrics is important. Look for resources and information at your institution to find out what work, committees or policies exist related to the use of alternative metrics.

Charlesworth Author Services, a trusted brand supporting the world’s leading academic publishers, institutions and authors since 1928.
